ETSIIT Technical Challenge | From College Students to Entrepreneurs

Lacey Trebaol

January 20, 2015

This post was written by the winners of the TechChallenge: Israel Blancas Álvarez, Ignacio Cara Martín, Nicolás Guerrero García, and Benito Palacios Sánchez.

A year ago, we started our work for the IV ETSIIT Technical Challenge (video). Who are we? Well, we are four students studying Electrical Engineering and Computer Science at the University of Granada in Spain.

Our team, Prometheus, won the Tech Challenge sponsored by RTI. For this challenge, teams of four or five students had to create a product to solve a challenge proposed by an external company. The theme for this year's challenge was "Multi-Agent Video Distributed System."

We joined this challenge for practical experience. One year after the Tech Challenge, we are all still students, but we have now researched a business opportunity, designed the competitive product Locaviewer, developed a strategy to sell it in the market and created a working prototype in addition to the course work required for our degrees.

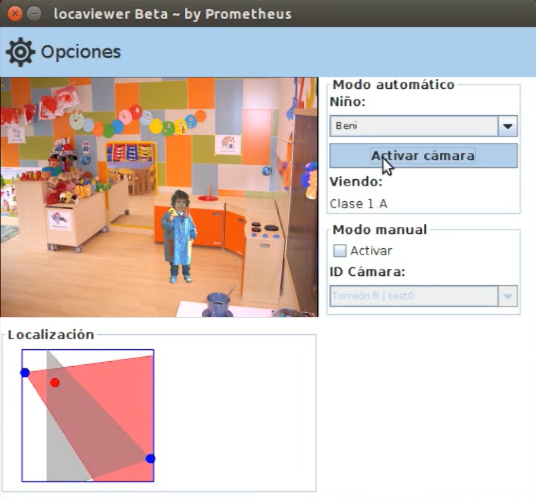

Locaviewer

Most parents with children in a nursery school worry about their children's health and progress. Our product, Locaviewer, lets parents track and watch their child in real time. As part of our marketing plan, we created a promotional video. Our code has been released under the MIT license on GitHub.

Team Organization and Schedule

The project took us approximately 250 hours to complete. Each week we met for at least 4 hours, except in the last month, when we spent 20 hours/week on the project. To be more efficient, we divided into two teams. Two people worked on the indoor Bluetooth location algorithm. The other two focused on an application to capture, encode and decode a video stream, and share it using RTI Connext DDS.

Location Algorithm

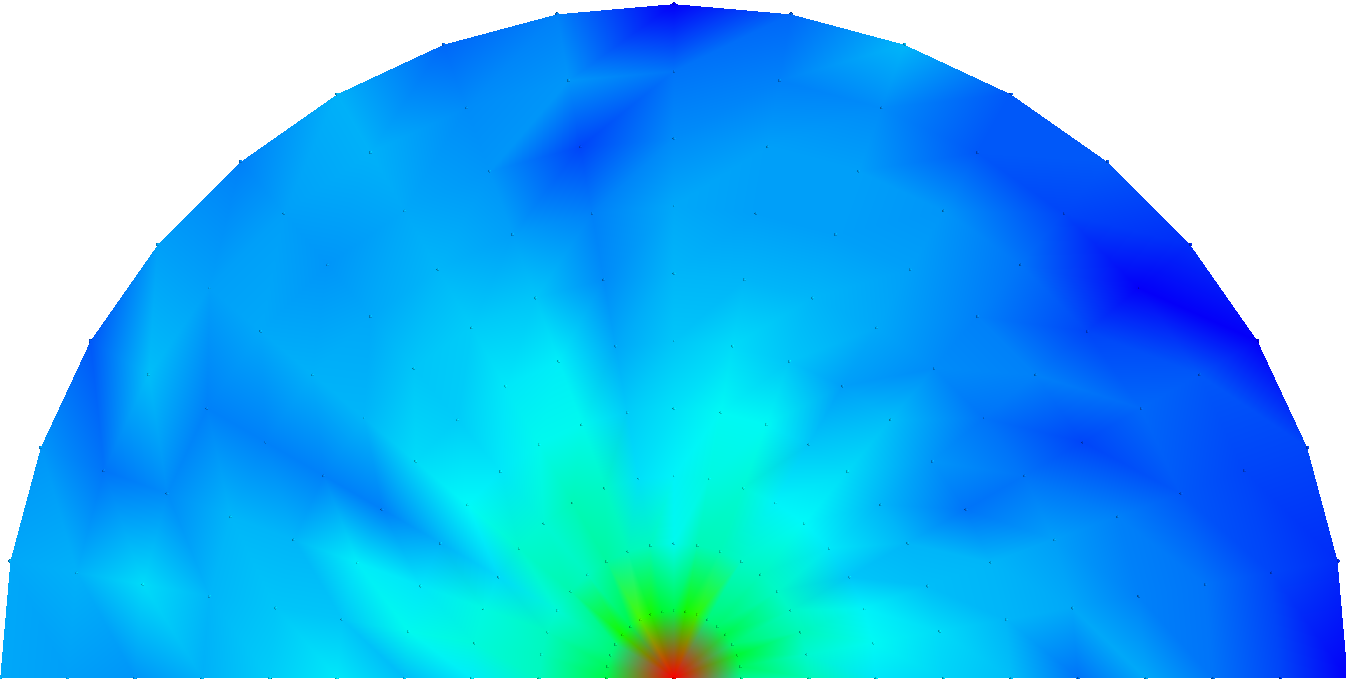

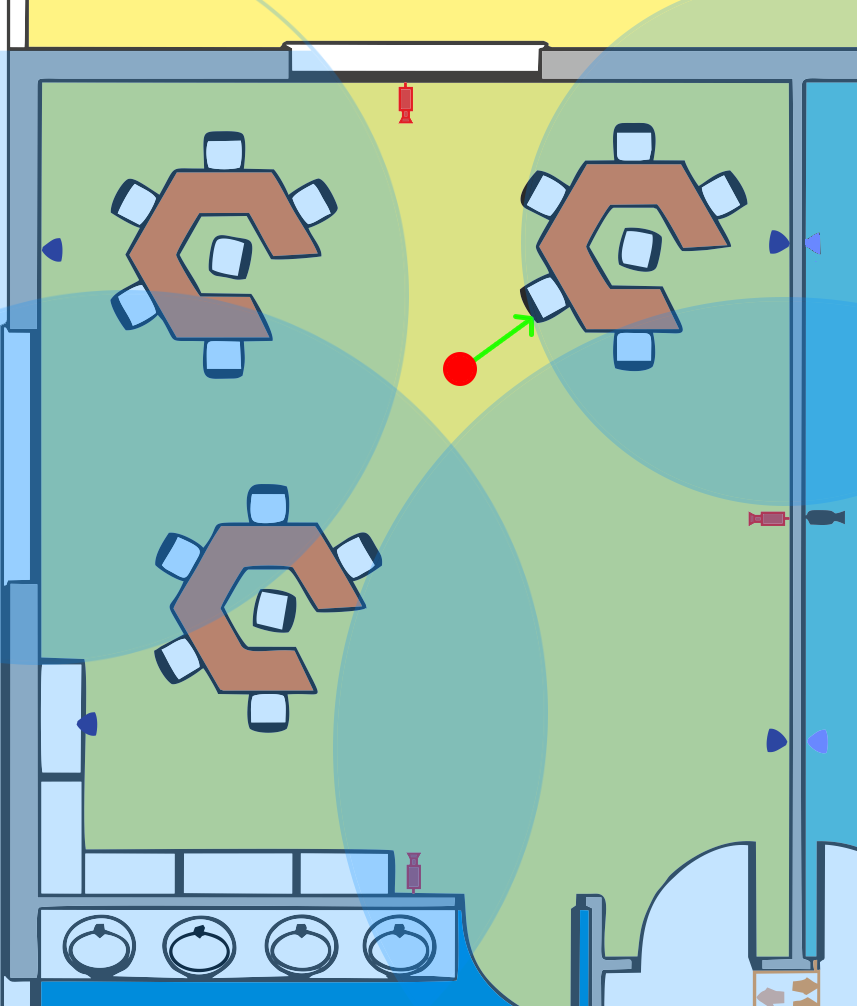

The first and most important step of our solution was to determine the location of the children inside the nursery school. Each child needed to wear a wristband with a Bluetooth device (the sensor), which continually reported the signal power received from Bluetooth devices (dongles) placed on the room walls. This Received Signal Strength Indication (RSSI) value is usually measured in decibels (dB). We determined the relationship between RSSI and distance.
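The post does not state the relationship the team derived. A common starting point is the log-distance path-loss model, sketched below purely as an illustration. The reference reading at 1 m (`rssiAt1m`) and the path-loss exponent (`n`) are assumed calibration values, and the class and method names are hypothetical.

```java
/** Illustrative RSSI-to-distance conversion using the log-distance path-loss model. */
class RssiDistance {

    /**
     * Estimates the distance in meters from an RSSI reading.
     *
     * @param rssi     measured signal strength (same unit as rssiAt1m)
     * @param rssiAt1m RSSI measured 1 m away from the device (assumed calibration value)
     * @param n        path-loss exponent, about 2 in free space and higher indoors (assumed)
     */
    static double estimateMeters(double rssi, double rssiAt1m, double n) {
        // Model: rssi = rssiAt1m - 10 * n * log10(d)
        // Solved for d: d = 10 ^ ((rssiAt1m - rssi) / (10 * n))
        return Math.pow(10.0, (rssiAt1m - rssi) / (10.0 * n));
    }
}
```

With a hypothetical calibration of -59 at 1 m and an exponent of 2, a reading 20 units weaker than the reference maps to about ten times the reference distance. In practice both constants would have to be measured for the specific dongles and room.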

The RSSI values were transmitted to a minicomputer (Raspberry Pi or MK802 III) to run a triangulation algorithm and identify the child's location. Since we knew the camera position, after determining the position of the child, we knew which cameras were recording the child and selected the best camera.
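The team's triangulation ran as a MATLAB/Octave script that is not reproduced in this post. As a rough illustration only, the Java sketch below solves the textbook three-anchor case by subtracting the circle equations to obtain a linear system, then picks the nearest camera. Choosing the closest camera is an assumption, since the post does not say how the "best" camera was ranked, and all class and method names are hypothetical.

```java
/** Illustrative 2D trilateration from three dongles, plus a nearest-camera pick. */
class Trilateration {

    /**
     * Locates a point from three anchors and the estimated distance to each.
     *
     * @param ax anchor x coordinates (length 3)
     * @param ay anchor y coordinates (length 3)
     * @param d  estimated distances to the anchors (length 3)
     * @return the estimated position as {x, y}
     */
    static double[] locate(double[] ax, double[] ay, double[] d) {
        // Subtracting circle 0 from circles 1 and 2 cancels the x^2 and y^2 terms,
        // leaving two linear equations: a*x + b*y = c.
        double a1 = 2 * (ax[1] - ax[0]);
        double b1 = 2 * (ay[1] - ay[0]);
        double c1 = d[0] * d[0] - d[1] * d[1]
                + ax[1] * ax[1] - ax[0] * ax[0]
                + ay[1] * ay[1] - ay[0] * ay[0];
        double a2 = 2 * (ax[2] - ax[0]);
        double b2 = 2 * (ay[2] - ay[0]);
        double c2 = d[0] * d[0] - d[2] * d[2]
                + ax[2] * ax[2] - ax[0] * ax[0]
                + ay[2] * ay[2] - ay[0] * ay[0];

        // Solve the 2x2 system with Cramer's rule.
        double det = a1 * b2 - a2 * b1;
        if (Math.abs(det) < 1e-12) {
            throw new IllegalArgumentException("Anchors must not be collinear");
        }
        double x = (c1 * b2 - c2 * b1) / det;
        double y = (a1 * c2 - a2 * c1) / det;
        return new double[] {x, y};
    }

    /** Returns the index of the camera closest to the position, or -1 if there are none. */
    static int nearestCamera(double[] position, double[][] cameras) {
        int best = -1;
        double bestSquared = Double.POSITIVE_INFINITY;
        for (int i = 0; i < cameras.length; i++) {
            double dx = cameras[i][0] - position[0];
            double dy = cameras[i][1] - position[1];
            double squared = dx * dx + dy * dy;
            if (squared < bestSquared) {
                bestSquared = squared;
                best = i;
            }
        }
        return best;
    }
}
```

With noisy RSSI-derived distances, three circles rarely meet at one point, so a real deployment would more likely combine more than three dongles in a least-squares fit.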

Video Recording Application

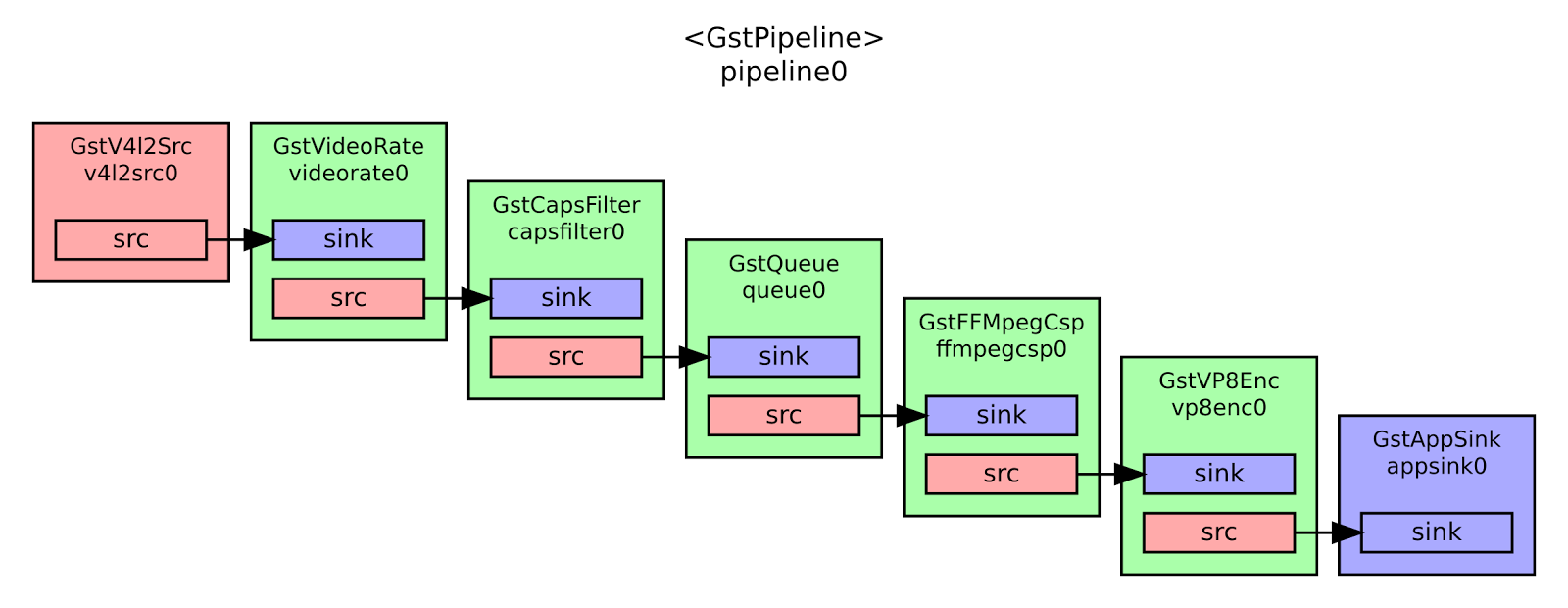

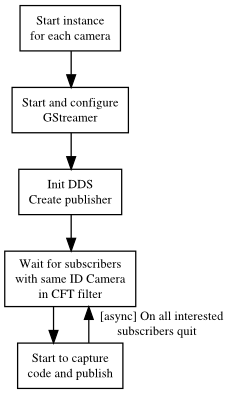

To record, encode, decode and visualize video, we used GStreamer for Java. We first tried other libraries such as vlcj, but they did not support Raspberry Pi or satisfy the real-time constraints of our system. After some research, we discovered GStreamer, which worked on Raspberry Pi and let us easily get the encoded video buffer in real time (using AppSink and AppSource elements). This allowed us to encapsulate the buffer and send it to a DDS topic. We worked on this for several months, even implementing a temporary workaround with HTTP streaming using vlcj until we settled on our final approach.

We used the VP8 (WebM) video encoder. Since the wrapper for Java only works with GStreamer version 0.10, we could not optimize it, and had to reduce the video dimensions. Our tests used Raspberry Pi, but we plan to use an MK802 III device in the final implementation because it has the same price but more processing power. The final encoding configuration was:

We used the following Java code to create the VP8 encoder elements.

```java
// Uses org.gstreamer.Caps, Element and ElementFactory from gstreamer-java (GStreamer 0.10).
Element codec = ElementFactory.make("vp8enc", null);
codec.set("threads", 5);
codec.set("max-keyframe-distance", 20);
codec.set("speed", 5);

// Constrain the encoder output to VP8.
Element capsDst = ElementFactory.make("capsfilter", null);
capsDst.setCaps(Caps.fromString("video/x-vp8, profile=(string)2"));
```

On the client side, we used the following configuration:

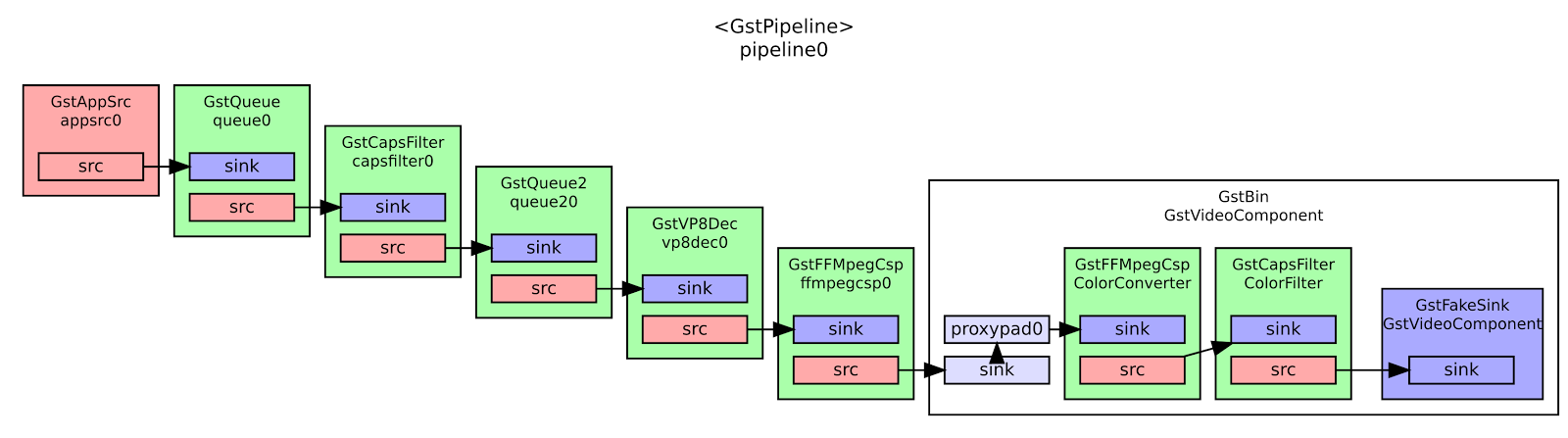

We used the following Java code to create the VP8 decoder elements.

```java
// Caps describing the incoming VP8 stream.
String caps = "video/x-vp8, width=(int)320, height=(int)240, framerate=15/1";
Element capsSrc = ElementFactory.make("capsfilter", null);
capsSrc.setCaps(Caps.fromString(caps));

// Buffer, decode, and convert the frames for display.
Element queue = ElementFactory.make("queue2", null);
Element codec = ElementFactory.make("vp8dec", null);
Element convert = ElementFactory.make("ffmpegcolorspace", null);
```

We also tried JPEG encoding, but this was not feasible for real-time use due to the larger size and greater number of packets.

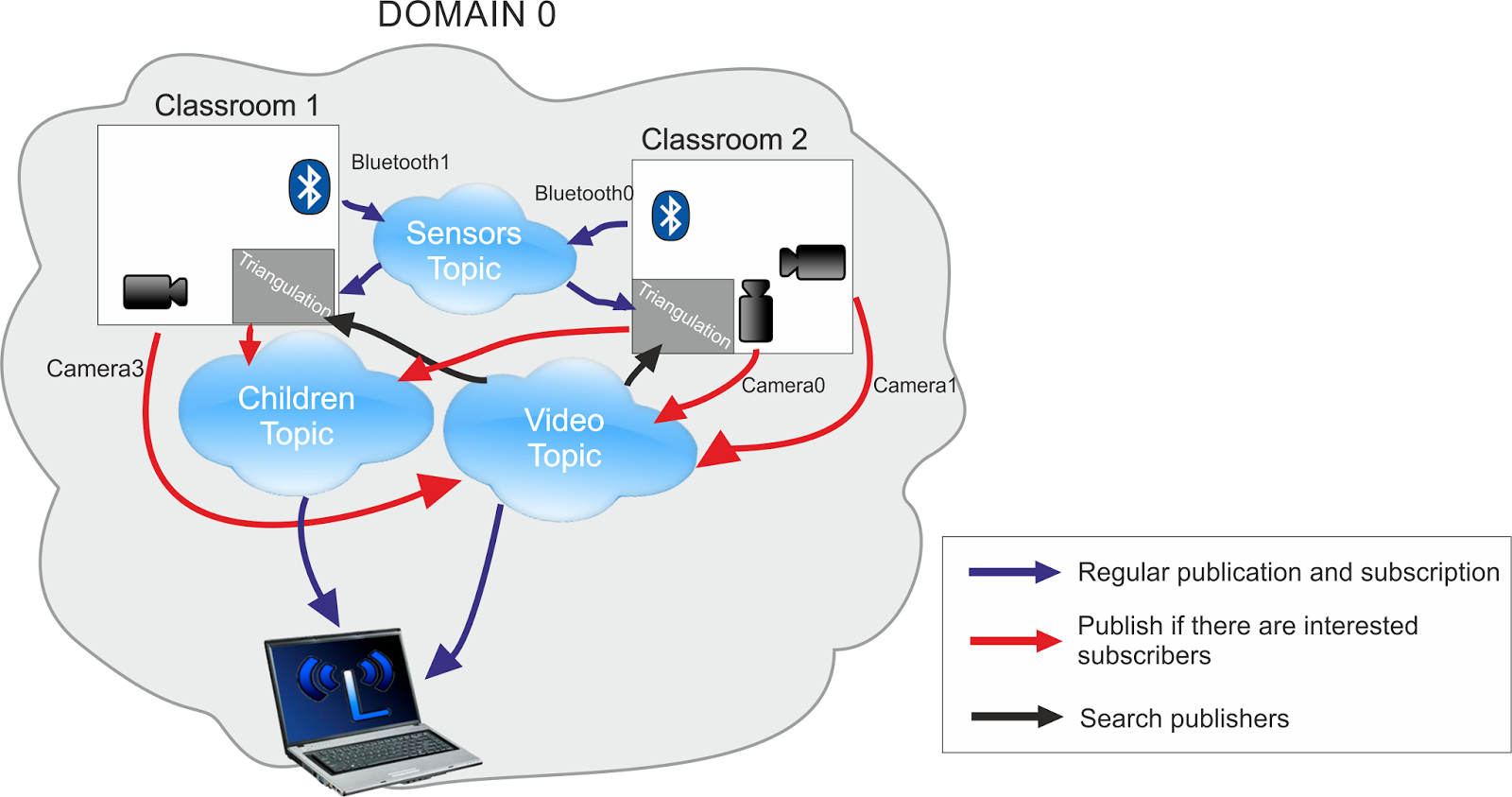

DDS Architecture

The publish-subscribe approach was key to our solution. It allowed us to share data between many clients without worrying about network sockets or connections. We just needed to specify what kind of data to send and receive. We created a wrapper library, DDStheus, to abstract DDS usage in our system.

Our final solution was composed of six programs that shared three topics. We used different programming languages:

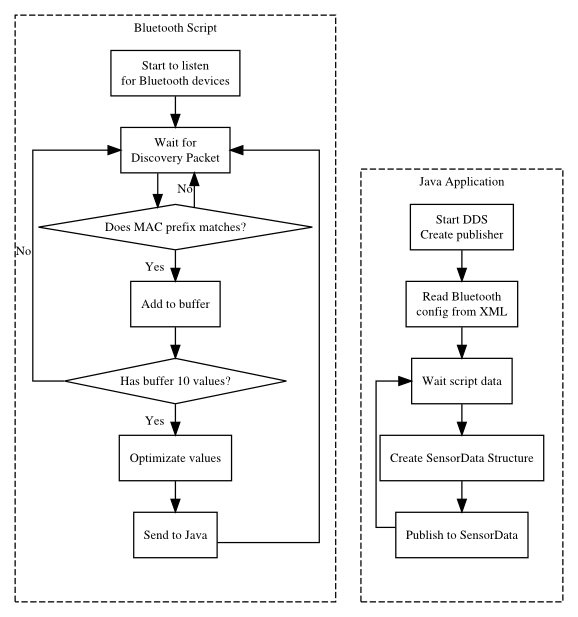

- Python to work at low level (HCI) with Bluetooth devices

- MATLAB/Octave to make the triangulation script

- Java to work with RTI Connext DDS and graphical user interfaces

We needed to know all the RSSI values in a room. We created a script to configure the Bluetooth dongles and get the RSSI information. These values were sent to a Java program using a simple socket connection in the same machine. The Java application published the data in the Sensor Data topic. It sent Child ID (the sensor Bluetooth MAC), Bluetooth dongle ID and position, current room (as a key to filter by room), RSSI value and expire time.
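To make the hand-off concrete, here is a minimal sketch of how the Java side might parse one reading received from the Python script before publishing it. The semicolon-separated line format, the `SensorSample` class, its field names, and the time-to-live parameter are all assumptions for illustration; the post only lists which fields the Sensor Data topic carried.

```java
/** Hypothetical in-memory form of one Sensor Data sample. */
class SensorSample {
    final String childId;       // Bluetooth MAC of the child's sensor
    final String dongleId;      // ID of the dongle on the wall
    final double dongleX;       // dongle position in the room
    final double dongleY;
    final String room;          // used as the key to filter by room
    final int rssi;
    final long expiresAtMillis; // after this instant the reading is stale

    SensorSample(String childId, String dongleId, double dongleX, double dongleY,
                 String room, int rssi, long expiresAtMillis) {
        this.childId = childId;
        this.dongleId = dongleId;
        this.dongleX = dongleX;
        this.dongleY = dongleY;
        this.room = room;
        this.rssi = rssi;
        this.expiresAtMillis = expiresAtMillis;
    }

    /**
     * Parses a line in the assumed format "childMac;dongleId;x;y;room;rssi".
     * The expiry time is derived from the receive time plus a time-to-live.
     */
    static SensorSample parse(String line, long nowMillis, long ttlMillis) {
        String[] fields = line.trim().split(";");
        if (fields.length != 6) {
            throw new IllegalArgumentException("Expected 6 fields, got " + fields.length);
        }
        return new SensorSample(
                fields[0],
                fields[1],
                Double.parseDouble(fields[2]),
                Double.parseDouble(fields[3]),
                fields[4],
                Integer.parseInt(fields[5]),
                nowMillis + ttlMillis);
    }
}
```

In this sketch each line would be read from the local socket and the resulting object copied into the DDS sample type before writing; that DDS step is omitted here.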

After the cameras recorded and encoded the video, the Java program Gava sent it via the Video Data topic. Each sample carried the camera ID as a key, so that subscribers could filter the stream with a ContentFilteredTopic, together with the camera position, room, encoded frame and codec info.

Furthermore, the application put the camera ID, room and camera position in the USER_DATA QoS value of each video publisher. The triangulation minicomputer could then get all the camera info in a room just by discovering publishers. It could also detect new and broken cameras in real time and update the location script to improve the camera selection algorithm.
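USER_DATA is an opaque byte sequence in DDS, so the camera metadata has to be serialized by the application. The sketch below shows one possible encoding as a UTF-8 string. The `id;room;x;y` layout and the `CameraInfo` class are assumptions, not the format the project used, and attaching the bytes to the QoS through the DDS API is omitted.

```java
import java.nio.charset.StandardCharsets;

/** Hypothetical camera metadata carried in the USER_DATA bytes. */
class CameraInfo {
    final String cameraId;
    final String room;
    final double x;
    final double y;

    CameraInfo(String cameraId, String room, double x, double y) {
        this.cameraId = cameraId;
        this.room = room;
        this.x = x;
        this.y = y;
    }

    /** Serializes to "id;room;x;y" (IDs and room names must not contain ';'). */
    byte[] toUserData() {
        String text = cameraId + ";" + room + ";" + x + ";" + y;
        return text.getBytes(StandardCharsets.UTF_8);
    }

    /** Rebuilds the metadata from bytes read out of a discovered writer's USER_DATA. */
    static CameraInfo fromUserData(byte[] data) {
        String[] fields = new String(data, StandardCharsets.UTF_8).split(";");
        if (fields.length != 4) {
            throw new IllegalArgumentException("Expected 4 fields, got " + fields.length);
        }
        return new CameraInfo(
                fields[0],
                fields[1],
                Double.parseDouble(fields[2]),
                Double.parseDouble(fields[3]));
    }
}
```

A text encoding keeps the payload easy to inspect with discovery tools; a fixed binary layout would be more compact if the USER_DATA size limit were a concern.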

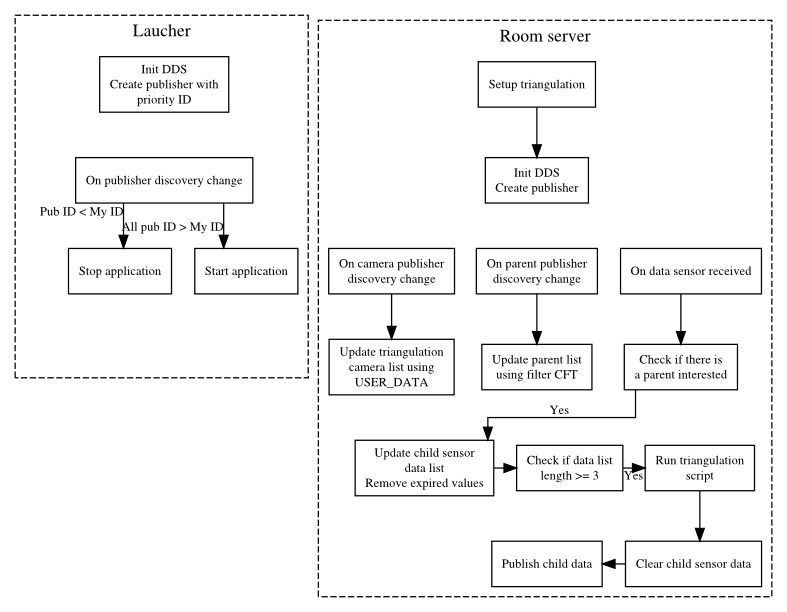

In the last step, we processed the data and published the result to the Child Data topic. This was done by the room server (a Raspberry Pi or MK802 III), which triangulated the child's location and selected the appropriate camera. It received only the data from sensors in its own room and gathered the info of all video publishers in that room. The data was passed to an Octave script, which returned the child's location and the best camera ID. The information sent to the cloud on the Child Data topic included the child ID, video quality, camera ID, child location and room ID. For efficiency, the child ID and quality were sent as keys that could be used for filtering or for sorting video.
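The selection of readings that feed the triangulation can be sketched in plain Java. In the deployed system the room filter was presumably applied by DDS itself, since the room was a key on the Sensor Data topic; the code below only illustrates the logic of keeping readings from one room that have not yet expired. The `Reading` type and its fields are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative pre-filter for the readings passed to the triangulation script. */
class RoomFilter {

    /** Minimal hypothetical reading: source room, dongle, RSSI and expiry time. */
    static final class Reading {
        final String room;
        final String dongleId;
        final int rssi;
        final long expiresAtMillis;

        Reading(String room, String dongleId, int rssi, long expiresAtMillis) {
            this.room = room;
            this.dongleId = dongleId;
            this.rssi = rssi;
            this.expiresAtMillis = expiresAtMillis;
        }
    }

    /** Keeps only readings from the given room that are still valid at nowMillis. */
    static List<Reading> usable(List<Reading> all, String room, long nowMillis) {
        List<Reading> result = new ArrayList<Reading>();
        for (Reading r : all) {
            if (r.room.equals(room) && r.expiresAtMillis > nowMillis) {
                result.add(r);
            }
        }
        return result;
    }
}
```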

To optimize the application, the room server called the triangulation script only if there was a subscriber asking for the child. We determined this using subscriber discovery and looking at the ContentFilteredTopic filter parameters.
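The decision logic behind this optimization might look like the sketch below. It assumes that each discovered subscriber's filter parameters contain the child IDs it is interested in, possibly wrapped in single quotes as string literals; reading those parameters from DDS discovery data is omitted, and the class and method names are hypothetical.

```java
import java.util.HashSet;
import java.util.List;
import java.util.Set;

/** Illustrative check of whether any subscriber currently wants a given child. */
class DemandTracker {

    /** Collects the child IDs found in the filter parameters of all discovered subscribers. */
    static Set<String> requestedChildren(List<List<String>> filterParamsPerSubscriber) {
        Set<String> ids = new HashSet<String>();
        for (List<String> params : filterParamsPerSubscriber) {
            for (String param : params) {
                ids.add(stripQuotes(param));
            }
        }
        return ids;
    }

    /** Removes one pair of surrounding single quotes, if present. */
    static String stripQuotes(String s) {
        if (s.length() >= 2 && s.startsWith("'") && s.endsWith("'")) {
            return s.substring(1, s.length() - 1);
        }
        return s;
    }

    /** The triangulation script only needs to run for children somebody is watching. */
    static boolean shouldTriangulate(String childId, Set<String> requested) {
        return requested.contains(childId);
    }
}
```

The set would be rebuilt whenever a subscriber appears, disappears, or changes its filter, so the room server skips the Octave call for children nobody is watching.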

Finally, we implemented a redundancy mechanism to handle the room server failure. Each minicomputer in the room created a publisher and set its USER_DATA value to the room and a default (unique) priority ID. If one of the minicomputers detected that it had the lowest ID in its room, it started the server application, and acted as the server until a new minicomputer with a lower ID appeared.
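The election rule described above reduces to a comparison against the IDs discovered in the same room. The following is a minimal sketch of that rule; the class and method names are hypothetical, and collecting peer IDs from the discovered publishers' USER_DATA is left out.

```java
import java.util.Collection;

/** Illustrative lowest-ID election among the minicomputers in one room. */
class RoomServerElection {

    /**
     * Returns true when ownId is lower than every peer ID discovered in the room.
     * IDs are assumed to be unique, so ties cannot occur.
     */
    static boolean shouldActAsServer(int ownId, Collection<Integer> peerIdsInRoom) {
        for (int peerId : peerIdsInRoom) {
            if (peerId < ownId) {
                return false;
            }
        }
        return true;
    }
}
```

Re-evaluating this check on every discovery event gives the hand-over behavior from the text: a node starts serving when the peer with a lower ID disappears, and stops when one appears.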

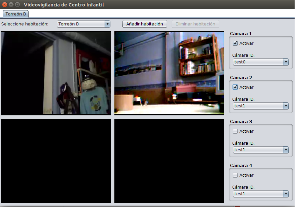

User Applications

We developed two end-user applications. The first one will be used by the parents to see their children in the nursery school. The second program will be used by nursery employees to see all the cameras in real time, manage parent access (add and remove) and automatically handle attendance control.

Final Thoughts

We had to cope with two big problems in the challenge:

- Getting the RSSI values: we bought a very low-quality, low-cost Bluetooth device (around $5). The signal had a lot of errors and noise, so we had to develop an algorithm to clean up the values, reducing the error from 3 to 0.5 meters. We also could not find any Java library for low-level operations with Bluetooth devices, so we used pybluez and had our Python and Java programs communicate with each other.

- Video encoding: it was not easy to find a library that allowed us to get the encoded video buffer. It was even more difficult to optimize the elements in the GStreamer 0.10 pipeline to work at maximum performance in the Raspberry Pi. With the final configuration, the image delay is around 3-5 seconds. For better performance, we plan to replace the Raspberry Pi with a similarly priced MK802 III device, which includes Wi-Fi and a dual-core Cortex A9 processor.
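The first point mentions an algorithm for cleaning up noisy RSSI values but does not describe it. As a stand-in, the sketch below uses a sliding-window median, a common way to reject outlier readings. It is not the team's algorithm, and the window size is an arbitrary assumption.

```java
import java.util.ArrayDeque;
import java.util.Arrays;
import java.util.Deque;

/** Illustrative sliding-window median filter for noisy RSSI readings. */
class RssiMedianFilter {
    private final int windowSize;
    private final Deque<Integer> window = new ArrayDeque<Integer>();

    RssiMedianFilter(int windowSize) {
        if (windowSize < 1) {
            throw new IllegalArgumentException("windowSize must be at least 1");
        }
        this.windowSize = windowSize;
    }

    /** Adds a reading and returns the median of the most recent windowSize readings. */
    double add(int rssi) {
        window.addLast(rssi);
        if (window.size() > windowSize) {
            window.removeFirst(); // drop the oldest reading
        }
        // Copy and sort the current window to find its middle value.
        int[] sorted = new int[window.size()];
        int i = 0;
        for (int value : window) {
            sorted[i++] = value;
        }
        Arrays.sort(sorted);
        int mid = sorted.length / 2;
        if (sorted.length % 2 == 1) {
            return sorted[mid];
        }
        return (sorted[mid - 1] + sorted[mid]) / 2.0;
    }
}
```

A median ignores a single wild reading inside the window, whereas a plain average would be pulled toward it. The trade-off is latency: a larger window is smoother but reacts more slowly when the child moves.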

RTI Connext DDS saved us a lot of work by implementing networking, data serialization, and quality of service mechanisms. We thank our engineering school and RTI for giving us the opportunity and resources to successfully address this business challenge.
